Hi! 👋 Welcome to The Big Y!

One of the biggest problems with LLMs is that they are often confidently incorrect. The consequences are wide-ranging, and as we saw in last week’s bottom tidbit, ChatGPT is being used in the real world, where it can do real harm. An adjacent story making the rounds this week highlights how the National Eating Disorders Association had to shut down its chatbot after it started giving advice that encouraged the very eating disorders the association is trying to prevent.

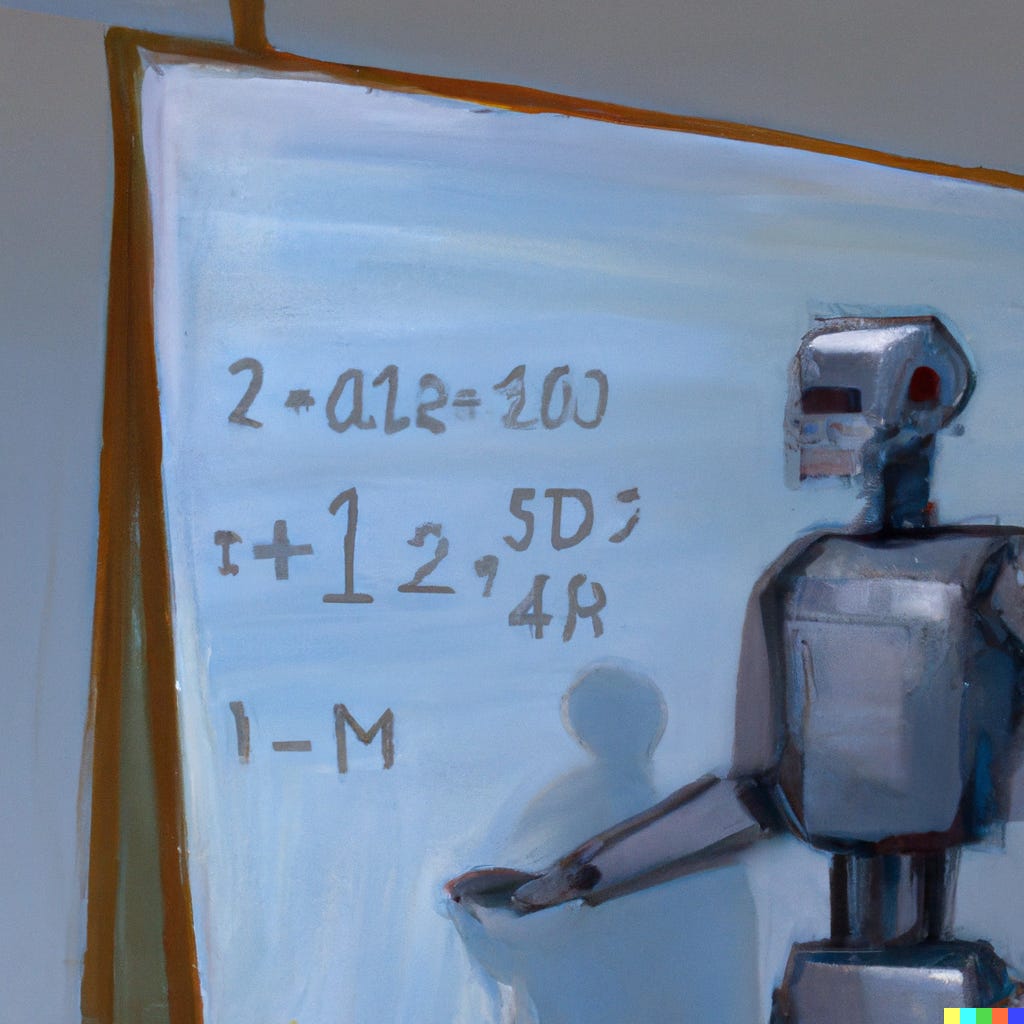

Hallucination, which now has its own Wikipedia page, is a somewhat obscure term for an LLM’s statistical calculation being off when it estimates the certainty of its output. A new preprint details a method that could potentially reduce wrong answers given by LLMs.

At a very high level, the paper describes an approach in which the model self-checks its generated output by estimating the quality of that output. The approach, called SMCP3, extends commonly used sequential Monte Carlo algorithms with probabilistic program proposals. Compared to the current approach for LLMs, SMCP3 improves the algorithm’s ability to guess potential explanations of its data and then estimate the quality of those explanations.
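To make the "guess explanations, then score them" idea a bit more concrete, here is a toy sketch in Python. To be clear, this is not SMCP3 and not code from the paper. It is plain importance sampling, the basic building block that Monte Carlo methods of this kind extend. The coin-flip problem, the uniform proposal, and every function name below are assumptions chosen purely for illustration.

```python
import random


def likelihood(bias, flips):
    """Probability of the observed flips (1 = heads, 0 = tails) given a coin bias."""
    heads = sum(flips)
    tails = len(flips) - heads
    return (bias ** heads) * ((1.0 - bias) ** tails)


def weighted_guesses(flips, num_particles=2000, seed=0):
    """Propose candidate explanations, then score each one against the data."""
    rng = random.Random(seed)
    # Proposal step: guess coin biases uniformly at random.
    # This naive proposal is an assumption; smarter proposals waste fewer guesses.
    particles = [rng.random() for _ in range(num_particles)]
    # Weighting step: with a uniform prior and a uniform proposal,
    # each guess's importance weight is just its likelihood.
    weights = [likelihood(b, flips) for b in particles]
    total = sum(weights)
    return particles, [w / total for w in weights]


def posterior_mean(particles, weights):
    """Weighted average of the guesses: the estimate of the coin's bias."""
    return sum(p * w for p, w in zip(particles, weights))


def effective_sample_size(weights):
    """Rough count of how many guesses actually carry weight (a quality check)."""
    return 1.0 / sum(w * w for w in weights)


if __name__ == "__main__":
    flips = [1] * 7 + [0] * 3  # hypothetical data: 7 heads, 3 tails
    particles, weights = weighted_guesses(flips)
    print("estimated bias:", round(posterior_mean(particles, weights), 3))
    print("effective sample size:", round(effective_sample_size(weights)))
```

The effective sample size is the "self-check" part of this toy: when it is small relative to the number of guesses, most guesses were poor explanations of the data and the estimate deserves less trust. Better proposals are one way to push that number up.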

I think we’ll continue to see more tools and techniques that try to tackle the problem of wrong answers and mistakes earlier in the LLM development process, rather than as a final check at application deployment.

The AI space is obviously moving quickly, with new players coming onto the scene regularly, and it’s hard to keep up and stay relevant. Following Stability AI’s quick rise to the top, this new profile digs a bit deeper into the company’s founder and their challenges (including challenges raising a next round).

Know someone who might enjoy this newsletter? Share it with them and help spread the word!

Thanks for reading! Have a great week! 😁

🎙 The Big Y Podcast: Listen on Spotify, Apple Podcasts, Stitcher, Substack