Hi! 👋 Welcome to the Big Y!

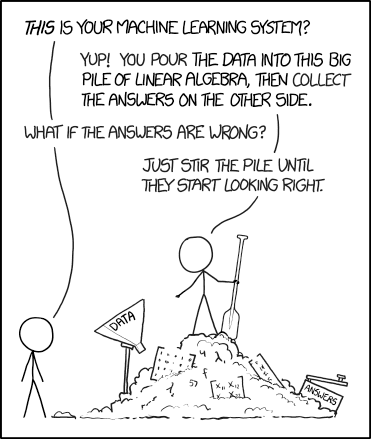

Real-world data is biased. It could be that the data you're using doesn't represent reality, that underlying prejudices are already reflected in the data, or that the attributes you selected during training are unintentionally introducing bias into the model.

Deep learning algorithms show up across our society: in our social media timelines, retail ads, and search engine results, but also in our judicial system, airport security, and surveillance, where facial recognition erodes our privacy. When these models are biased, at best your ad won't be perfectly tailored. At worst, you could receive a much harsher criminal sentence because of your race, which is a big deal.

The solution to this problem is not straightforward, but we need to start somewhere. Systemic racism and bias are prevalent in all aspects of society, so it is no surprise that they show up in our machine learning models as well. One solution is to use synthetic data to generate data sets that reflect the world as we would like it to be, but we would need to recognize and overcome our own faults for this to work. If we don't address the fundamental issues, we run the risk of having the same problems. Another proposed solution is to use other algorithms to point out the biases a model has learned (examples: 1, 2, 3). For example, IBM has an open-source toolbox, AI Fairness 360.
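To make the idea of "algorithms that point out bias" a bit more concrete, here is a minimal sketch of two common group-fairness checks: statistical parity difference and disparate impact. It is plain Python and does not use the AI Fairness 360 API. The function names, the group labels `"a"` and `"b"`, and the toy predictions are all made up for illustration.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Return the fraction of favorable (1) predictions for each group."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, prediction in zip(groups, predictions):
        totals[group] += 1
        favorable[group] += prediction
    return {group: favorable[group] / totals[group] for group in totals}


def statistical_parity_difference(rates, unprivileged, privileged):
    """Difference in favorable-outcome rates; 0 means parity."""
    return rates[unprivileged] - rates[privileged]


def disparate_impact(rates, unprivileged, privileged):
    """Ratio of favorable-outcome rates; 1 means parity."""
    return rates[unprivileged] / rates[privileged]


# Hypothetical toy data: group membership and a model's binary predictions
# (1 = favorable outcome, e.g. "approved").
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
spd = statistical_parity_difference(rates, unprivileged="b", privileged="a")
di = disparate_impact(rates, unprivileged="b", privileged="a")

print(rates)
print(f"statistical parity difference: {spd:.2f}")
print(f"disparate impact: {di:.2f}")
```

In this toy example, group `"b"` gets the favorable outcome far less often than group `"a"`, which is the kind of gap these checks are meant to surface. A gap like this doesn't explain why the model is biased, but it does tell us where to start looking.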

Our understanding and accountability are part of the solution. Here are some illuminating articles:

Technology perpetuating racism (Paywall: MIT Tech Review)

It is important to remember that these are only the instances we know about. What about the unknown unknowns?

Thanks for reading! Share this with a friend if you think they'd like it too. Have a great week! 😁